Nuclear fusion simulation to pioneer transition to exascale supercomputers

The EU Commission is providing 2.14 million euros in funding to take the GENE simulation code developed at the Max Planck Institute for Plasma Physics (IPP) to a new level. Running on exascale supercomputers, the code is intended to enable digital twins of nuclear fusion experiments such as ITER in the future. IPP, the Max Planck Computing and Data Facility (MPCDF) and the Technical University of Munich will work together on the project.

Plasma physics has been one of the most important drivers of supercomputer development since the 1960s. This is because plasmas are highly complex systems that cannot be described with simple physical models. Almost the entire visible universe consists of such plasmas, which are extremely dynamic mixtures of predominantly charged particles (ions and electrons). Our sun and all other stars generate energy in such plasmas through nuclear fusion. Researchers need supercomputers to make this process usable on earth and to better understand the processes in the universe.

The Max Planck Computing and Data Facility (MPCDF) in Garching was launched in 1960 and the National Energy Research Supercomputer Center (NERSC) in the USA in 1974 as tools for plasma research. When the US supercomputer Roadrunner at Los Alamos National Laboratory was the first to break the petascale barrier in 2009 (i.e. it was able to perform more than 10¹⁵ = one quadrillion computing operations per second), a plasma simulation code called VPIC played an important role.

On the way to exascale supercomputers

In the forthcoming leap in high-performance computing, plasma modelling will again be among the pioneering applications. This leap is the launch of the first exascale computers in Europe. By definition, these can perform at least one quintillion computing operations per second (1 quintillion = 10¹⁸, written out as 1 with 18 zeros). From 2024 onwards, there will be supercomputers in Europe that exceed this threshold. The European Commission is providing a total of more than seven million euros to prepare four plasma simulation codes for the exascale era.
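To put the petascale and exascale thresholds in proportion, the short Python sketch below compares how long a fixed workload would take at each rate. The workload size is a hypothetical figure chosen for illustration, and the calculation assumes a machine sustains exactly its threshold rate, which real simulation codes generally do not.

```python
# Threshold rates in computing operations per second.
PETA = 10**15  # petascale: one quadrillion
EXA = 10**18   # exascale: one quintillion


def seconds_needed(operations: int, rate: int) -> float:
    """Idealised runtime: total operations divided by a constant rate."""
    return operations / rate


# Hypothetical workload, not a figure from the GENE project.
workload = 10**21

print(EXA // PETA)                                  # ratio of the two thresholds
print(seconds_needed(workload, PETA) / 86_400)      # runtime in days at petascale
print(seconds_needed(workload, EXA) / 60)           # runtime in minutes at exascale
```

Under these idealised assumptions, a job that occupies a petascale machine for more than eleven days would finish in under 17 minutes at exascale.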

A total of 2.14 million euros of the funding sum will go to the Garching site near Munich (the German Federal Ministry of Education and Research is providing half of the funding amount): The Max Planck Institute for Plasma Physics (IPP), the Max Planck Computing and Data Facility (MPCDF) and the Technical University of Munich (Department of Computer Science) will use it to jointly raise the GENE code to a new level from January 2023. GENE (Gyrokinetic Electromagnetic Numerical Experiment) is an open-source code that is used worldwide, especially for research into nuclear fusion plasmas. It is therefore used wherever researchers are working on generating energy on earth, following the example of the sun.

Predicting fusion experiments for the first time

“With today’s capabilities, GENE can already explain the physical causes of experimental results that we achieve, for example, with our fusion experiment ASDEX Upgrade at IPP,” explained Prof. Frank Jenko, Head of Tokamak Theory at IPP in Garching. He wrote the first version of GENE in 1999 and has been steadily developing the code with international teams ever since. “With an exascale version of GENE, we are now taking the step from interpretation to prediction of experiments. We want to create a virtual fusion plasma, the digital twin of a real plant, so to speak,” Jenko said, describing the goal.

He and his cooperation partners are also involved with ITER, the largest fusion experiment in the world, which is currently being built in Cadarache in southern France. ITER is designed to generate ten times as much fusion power as the heating power that needs to be put into it. The facility is intended as a preliminary stage of a future fusion power plant that will then actually supply electricity. To achieve this goal, scientists will have to adjust a variety of experimental parameters at ITER to find the most favourable combination, which would probably take many years through trial and error alone. An optimised GENE code should significantly speed up fusion research. With it, scientists will be able to calculate promising configurations in advance and rule out many others.

Why is the switch to exascale computers so complex?

“Unfortunately, it is not enough to simply transfer the previous programmes to the new computers,” Prof. Jenko said. “Performance leaps in new supercomputers are largely made possible by new hardware architectures today. Only if we adapt our codes to this can we really calculate faster.”

Prof. Jenko illustrated the task with an analogy from the world of paper files. “If the task is to evaluate ten thematically self-contained file folders, ten people can probably do it ten times faster than one. But if suddenly 10,000 people are available for the ten folders, that only helps if I completely reorganise and divide the work,” Prof. Jenko explained. It becomes even more complicated when the 10,000 people have different skills that need to be used optimally. The same applies if the evaluation of some folders depends on the results obtained from other folders.
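The folder analogy can be sketched as a small Python calculation. This is an illustrative toy model, not anything taken from GENE: it assumes all work items are equally large, independent of one another, and cannot be shared between people. The figure of 1,000 pages per folder is hypothetical.

```python
import math


def speedup(num_items: int, num_workers: int) -> float:
    """Speedup over a single worker for equal-sized, indivisible work items."""
    # In each round every worker handles at most one item;
    # workers without an item simply stay idle.
    rounds = math.ceil(num_items / num_workers)
    return num_items / rounds


folders = 10

print(speedup(folders, 10))       # ten people, one folder each
print(speedup(folders, 10_000))   # 10,000 people, but still only ten folders

# Reorganising the work: split every folder into 1,000 independent pages
# (hypothetical figure) so that each of the 10,000 people has something to do.
pages = folders * 1_000
print(speedup(pages, 10_000))
```

In this model, 10,000 people are no faster than ten as long as the work stays divided into ten folders. The gain appears only after the work is split into finer pieces, and the model still ignores the different skills and the dependencies between folders mentioned above, which make the real problem harder.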

The researchers face comparable tasks in the transition to exascale computers: “Today’s supercomputers achieve their performance increase by handling more and more computing tasks in parallel and by increasingly using graphics processors in addition to classical processors, both of which, however, have different strengths,” says Jenko. To prepare the GENE code for future computer generations, his team therefore includes experts who are involved in the design of future hardware generations. Co-design is the name given to this collaboration in the industry.

In the end, not only fusion research will benefit from the project: “With the GENE code, we are pioneers in the transition to exascale computers,” Prof. Jenko stated. “What we learn in the process will also help developers of other programmes.”

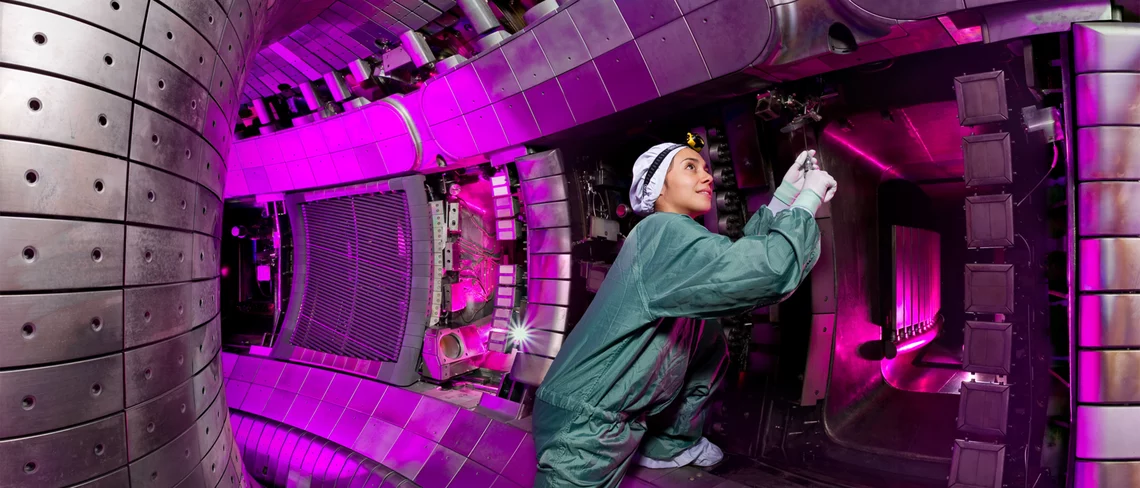

Photo: IPP.MPG.DE